11/28/2023

Airflow dag dependency

The second step is defining default arguments. And fifth, we’ll define our dependencies. Bring up your favorite text editor and let’s get started. Let’s start with step one, which is importing modules. The first thing that we need to import is `from airflow import DAG`. Next, we need to import some modules related to date and time: from `datetime`, import `datetime` and something called `timedelta`. Next, we will import an operator. In order to define tasks, we will be using operators. For now, I will be using a dummy operator, which, as the name suggests, does nothing. In the upcoming videos, we will use more advanced operators and write more complicated workflows. So, default args is a dictionary that we pass to the DAG object, and it contains some metadata.
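As a rough illustration of the date and time imports and the default arguments dictionary, here is a minimal sketch. The specific keys and values (`owner`, `start_date`, `retries`, `retry_delay`) are assumptions chosen for illustration and are not taken from the video; the Airflow import is left as a comment so the snippet runs with only the standard library.

```python
# Step 1 (importing modules): date and time helpers from the standard library.
from datetime import datetime, timedelta

# The walkthrough also imports the DAG class; kept as a comment here so the
# sketch runs without Airflow installed:
#   from airflow import DAG

# Step 2 (default arguments): a plain dictionary of metadata.
# All keys and values below are illustrative assumptions.
default_args = {
    "owner": "airflow",                   # who is responsible for the tasks
    "start_date": datetime(2023, 1, 1),   # first date the DAG may be scheduled
    "retries": 1,                         # retry a failed task once
    "retry_delay": timedelta(minutes=5),  # wait five minutes before retrying
}

print(default_args["retry_delay"].total_seconds())  # 300.0
```

In a real DAG file this dictionary would be handed to the DAG object, typically through its `default_args` parameter, so that every task in the DAG inherits these settings.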

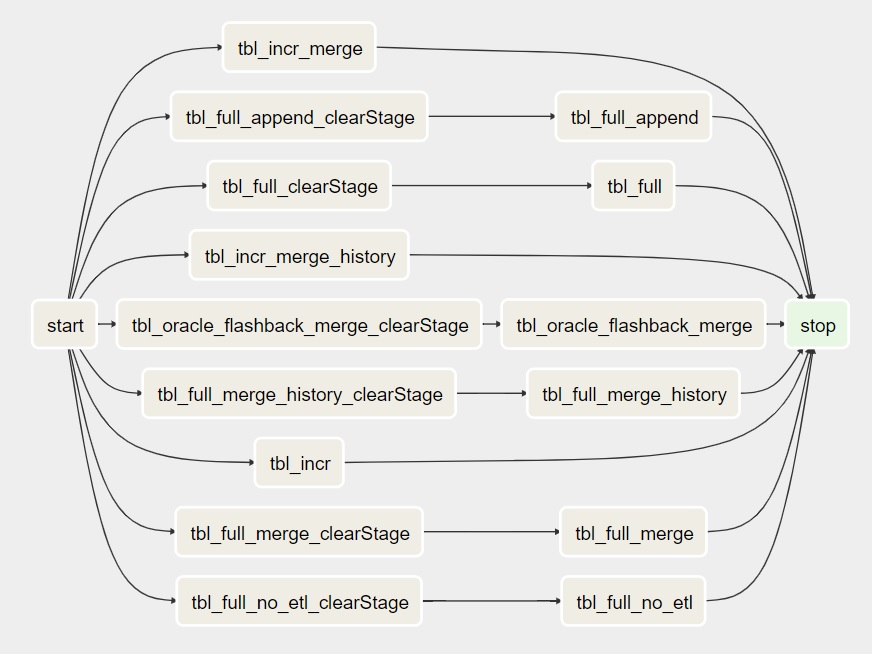

A DAG run is a metadata entry in the database that tells us how many times a DAG has run. A DAG run can either be created by the scheduler, or you can manually trigger a DAG to create its DAG run. A DAG can have multiple DAG runs at any given point in time.

Let’s talk about tasks. A node in the DAG represents a task, and tasks are the unit of work in Airflow.

Airflow Tasks

Each task can be defined using an operator, sensor or hook. At a high level, those are all classified as operators. We will talk more about what operators, sensors and hooks are in the upcoming videos.

Similarly, I would like to talk about task instances. A task instance is a runnable entity of a task: it is a run of a task at a point in time. If we have a DAG run, a task and a point in time, we can define a task instance. Task instances belong to DAG runs and tasks.

This is the graph view, and you can see that we have multiple tasks. Here we can see that it has a Python branch operator, a Python operator and a dummy operator. I won’t go into what these operators do here; we’ll talk about all of these in the upcoming videos. Right now we have one DAG run, and these are the task instances. If I trigger this DAG again, it has two DAG runs. If I trigger it again, it now has three DAG runs. It tells me that this DAG has three running instances, and we have multiple task instances. If we want to see all these task instances, we can switch over to this view and it will give us the list of all the task instances corresponding to all the DAG runs. We have these three DAG runs, and these are the task instances corresponding to them. We can see that this one is already completed and the rest are running. If I trigger it again, I’ll have two DAG runs, right? So I hope that the concept of DAG runs and task instances is clear to you all.

Now let’s talk about how to define a DAG. The process of writing a DAG file can be divided into five smaller steps.
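To make the relationship between DAG runs, tasks and task instances concrete, here is a toy model in plain Python. It is only a sketch: the classes `ToyDagRun` and `ToyTaskInstance`, the `trigger` helper and the DAG id `example_dag` are hypothetical names invented for this illustration and are not Airflow’s own classes or API.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ToyDagRun:
    """One run of a DAG at a point in time (hypothetical, not Airflow's model)."""
    dag_id: str
    run_time: datetime
    created_by: str  # "scheduler" or "manual"


@dataclass(frozen=True)
class ToyTaskInstance:
    """A task instance is identified by a DAG run plus a task."""
    dag_run: ToyDagRun
    task_id: str


def trigger(dag_id, task_ids, run_time, created_by="manual"):
    """Create one DAG run and one task instance per task in the DAG."""
    run = ToyDagRun(dag_id, run_time, created_by)
    return run, [ToyTaskInstance(run, task_id) for task_id in task_ids]


tasks = ["dummy_start", "dummy_end"]
first_trigger = datetime(2023, 1, 1)

dag_runs = []
task_instances = []
for i in range(3):  # trigger the same DAG three times
    run, instances = trigger("example_dag", tasks, first_trigger + timedelta(minutes=i))
    dag_runs.append(run)
    task_instances.extend(instances)

print(len(dag_runs), len(task_instances))  # 3 6
```

Each trigger adds one DAG run and one task instance per task, which mirrors what the UI shows when the same DAG is triggered several times.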

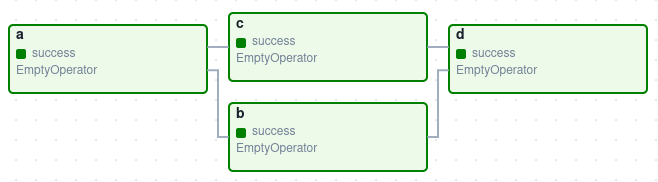

Now, a directed acyclic graph, as the self-explanatory name suggests, is a directed graph which has no cyclic dependencies. For example, in this directed graph, if I follow this path, I go here, then here, and you can see I am stuck in a cyclic dependency. We don’t want cyclic dependencies in our workflows. In this other graph, I can start from here and I’ll reach here. If I start from here, now I’m here, and this is the end, right? There are no cyclic dependencies, and this is what we want in our workflow. Let’s talk about DAGs with respect to Airflow. In Airflow, a DAG is a collection of tasks with defined dependencies and properties, and we define them using the Python programming language. In the Airflow web server we have two tasks, dummy start and dummy end. Another thing I would like to talk about is the DAG run.

In order to learn about DAGs, we need to know what directed graphs are. In order to know about directed graphs, we need to know what a graph is. You see what I did there? I made a workflow. In mathematics, a graph is... let me take the pointer. In mathematics, a graph is something which has nodes and edges. A directed graph, as the name suggests, has directed edges. We can see that there is a directed edge from this node to this node.
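The idea of a directed graph, and whether it contains a cycle, can be sketched with Python’s standard `graphlib` module (Python 3.9+). The helper name `run_order` and the node names `a`, `b`, `c` are assumptions made up for this example; `dummy_start` and `dummy_end` echo the two tasks mentioned in the walkthrough.

```python
from graphlib import CycleError, TopologicalSorter

# Each key is a node; its value is the set of nodes it depends on,
# i.e. there is a directed edge from each of those nodes to the key.
acyclic_graph = {"dummy_start": set(), "dummy_end": {"dummy_start"}}

# a depends on c, b depends on a, c depends on b: following the edges
# never reaches an end, so this graph has a cyclic dependency.
cyclic_graph = {"a": {"c"}, "b": {"a"}, "c": {"b"}}


def run_order(graph):
    """Return a valid execution order, or None if the graph contains a cycle."""
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError:
        return None


print(run_order(acyclic_graph))  # ['dummy_start', 'dummy_end']
print(run_order(cyclic_graph))   # None
```

An acyclic graph always has at least one valid order in which every node comes after the nodes it depends on, which is why workflows are modelled this way.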

Up to the last episode, we discussed the what and why of Apache Airflow and gave a brief overview of its architecture. In this episode we will talk about DAGs and tasks, which are the building blocks of any workflow. I will also show you how to define a DAG file and write your own DAG. Let’s start with directed acyclic graphs, or DAGs.
